Steward is an investigation into the concept of stewardship, employing a procedurally generated terrain, slowly decaying over time.

A Guide To Steward

Description

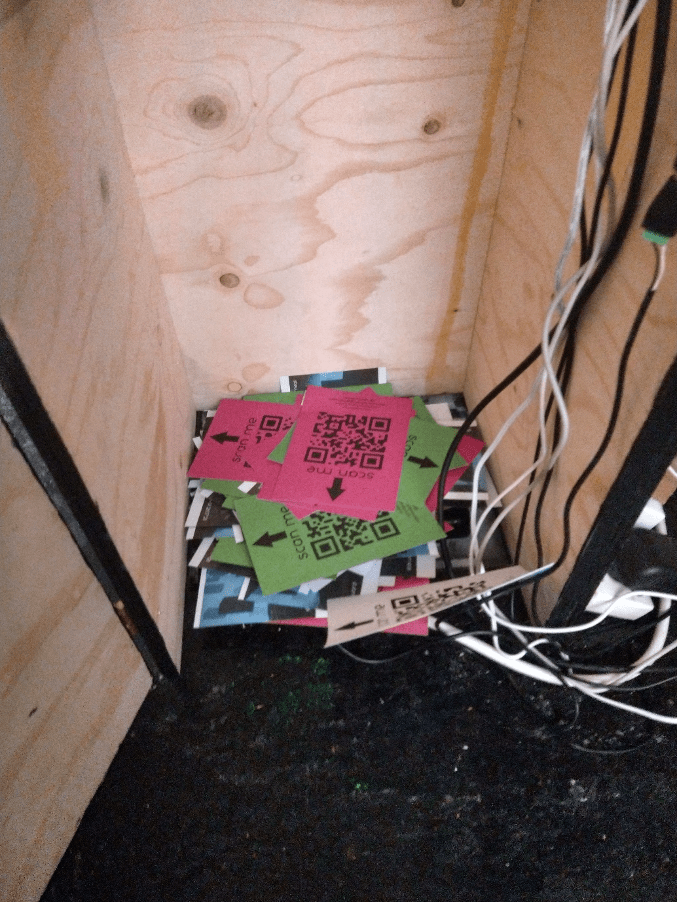

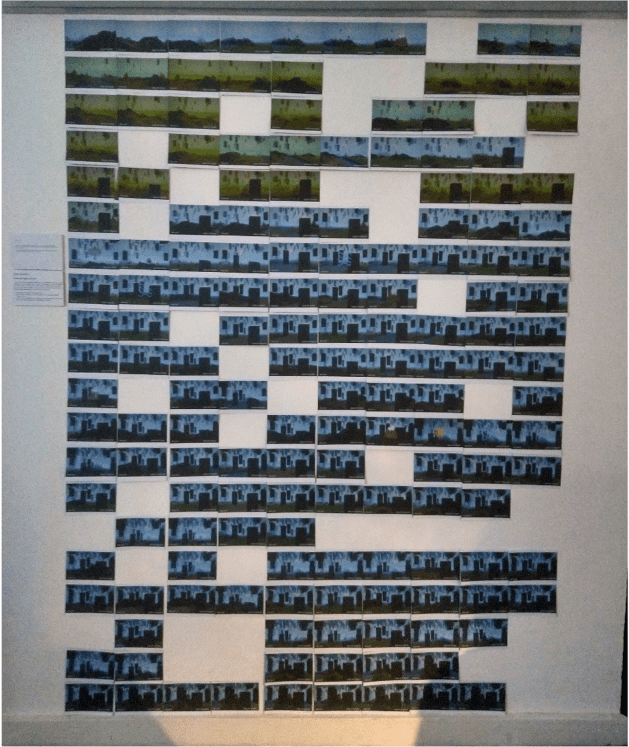

Steward is a dialog between audience and environment. Employing a procedurally generated terrain, slowly decaying over time, Steward records images of this landscape as vignettes printed out as postcards. The audience is invited to scan these postcards back into the system and hold back the decay or take the postcard with them, preserving the memory but at the cost of its further collapse.

Instructions

Preserve/Neglect/Extract

You are invited to engage with Steward as you see fit. You may wish to observe the landscape as it changes automatically or at the hands of others. You may wish to take a postcard for enjoyment or posterity. Or you may wish to interact directly with the simulated environment. For the latter, please follow the instructions below:

- Please take a recently printed postcard.

- Insert it, QR code side up, into the plinth, above the sign stating “insert here”.

- When the buttons light up, press the one corresponding to the aspect of the simulated world you would like to change.


Concept and Background Research

Steward is an investigation into the concept of stewardship and queries the anthropocentric viewpoint that often accompanies language with regard to complex natural or societal systems.

The basic premise of the piece is this: the audience interacts with an otherwise closed system that produces a desirable resource which must be fed back into this system lest it cause the system to decay.

I have used the language of landscape, something easily recognisable to a viewer, to represent a dynamic system (though the piece is not directly referential to any particular landscape or environment, nor is it singularly about the environment as a whole). The counterpoint to this landscape is the postcards, which act as the token resource produced by the landscape. Being objects with aesthetic value, a uniqueness and a direct reference to the state of the landscape at the time of printing, the postcards also provide an immediate payoff to the audience, building desirability. With that desirability established, it is then up to the audience to decide whether to take the postcard with them, extracting from the system, or to feed the postcard back into the system and preserve it for future audiences.

The concept of landscape I am referring to stems from artists such as Tony Cragg, Richard Long and Olafur Eliasson, who have abstracted the representation of landscapes into forms that enable audiences to recontextualize themselves within the physical and social geography of their world.

Technical Implementation

Terrain Shader

The foundation of the project is the terrain generation, which forms the underlying shape of the landscape. With dynamic creation of the landscape being a central technical requirement, I quickly realized that utilizing the GPU with shaders would be the best way of optimizing performance. The starting point for this was adapted from Sebastian Lague’s work with compute shaders and their use in terrain generation.

Compute shaders are programs that can run arbitrary calculations on the GPU. By passing in the desired size of the terrain as a number of vertices, we can run a noise function written by Keijiro Takahashi over each of these vertices and return a cohesive, wave-like heightmap as a 1D buffer. Lague suggests the use of “octaves” of noise, in which layers of noise at different scales are added together to give finer detail and a more natural landscape. Finally, using a “smoothstep”, I create plains between hillier regions for more visual variety and, again, a more natural environment. The heightmap can then be passed on to a function that constructs the mesh.
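The octave and smoothstep steps can be sketched on the CPU in plain Python. This is only an illustration of the idea, not the project's shader: it swaps in a simple hash-based value noise in place of Keijiro Takahashi's noise function, and the names `octave_noise` and `build_heightmap`, and the parameters `scale`, `plain_low` and `plain_high`, are assumptions made for the example.

```python
import math

def hash01(ix: int, iy: int, seed: int = 0) -> float:
    """Deterministic pseudo-random value in [0, 1) for an integer lattice point."""
    h = (ix * 374761393 + iy * 668265263 + seed * 144665) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    h ^= h >> 16
    return h / 2**32

def smoothstep(edge0: float, edge1: float, x: float) -> float:
    """Clamp x to [edge0, edge1], then ease it into [0, 1] with 3t^2 - 2t^3."""
    t = min(1.0, max(0.0, (x - edge0) / (edge1 - edge0)))
    return t * t * (3.0 - 2.0 * t)

def value_noise(x: float, y: float, seed: int = 0) -> float:
    """Stand-in noise (assumption): smoothly interpolated lattice values in [0, 1)."""
    ix, iy = math.floor(x), math.floor(y)
    u = smoothstep(0.0, 1.0, x - ix)
    v = smoothstep(0.0, 1.0, y - iy)
    a, b = hash01(ix, iy, seed), hash01(ix + 1, iy, seed)
    c, d = hash01(ix, iy + 1, seed), hash01(ix + 1, iy + 1, seed)
    top = a + (b - a) * u
    bottom = c + (d - c) * u
    return top + (bottom - top) * v

def octave_noise(x, y, octaves=4, lacunarity=2.0, persistence=0.5, seed=0):
    """Sum several layers of noise: each octave has a higher frequency and a lower amplitude."""
    total, norm, amplitude, frequency = 0.0, 0.0, 1.0, 1.0
    for i in range(octaves):
        total += amplitude * value_noise(x * frequency, y * frequency, seed + i)
        norm += amplitude
        amplitude *= persistence
        frequency *= lacunarity
    return total / norm  # normalised back into [0, 1)

def build_heightmap(width, depth, scale=0.1, plain_low=0.35, plain_high=0.65):
    """Return a flat (1D) list of heights in [0, 1], one per vertex, row by row."""
    heights = []
    for z in range(depth):
        for x in range(width):
            n = octave_noise(x * scale, z * scale)
            # smoothstep flattens everything below plain_low into plains at height 0
            heights.append(smoothstep(plain_low, plain_high, n))
    return heights
```

In a compute shader the two nested loops disappear, since each vertex is handled by its own GPU thread, but the per-vertex arithmetic would be of the same shape.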

When the mesh is constructed, the heightmap values (which are floats between 0 and 1) are scaled for a more dramatic scene. This scaling follows a noise value varying between a minimum and a maximum threshold, so that when the user presses the “Mountain” button, this vertical scalar also changes, along with the general movement of the terrain noise. Doing this should again provide more variety over time and make the movement of the hills across the screen less obvious as the noise effectively scrolls.
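As a rough sketch of that scaling step, assuming the vertical scalar is a linear blend between two thresholds driven by a noise value in [0, 1] (the function names and the default thresholds of 5 and 40 are hypothetical, not taken from the project):

```python
def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation between a and b."""
    return a + (b - a) * t

def vertical_scale(noise_value: float, min_scale: float, max_scale: float) -> float:
    """Map a noise value in [0, 1] onto a height multiplier between the two thresholds."""
    t = min(1.0, max(0.0, noise_value))
    return lerp(min_scale, max_scale, t)

def scale_heights(heights, noise_value, min_scale=5.0, max_scale=40.0):
    """Scale normalised heights (0..1) into world units for the mesh."""
    s = vertical_scale(noise_value, min_scale, max_scale)
    return [h * s for h in heights]
```

Pressing the “Mountain” button would then amount to changing the value passed as `noise_value`, so the same heightmap reads as gentler or more dramatic terrain.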

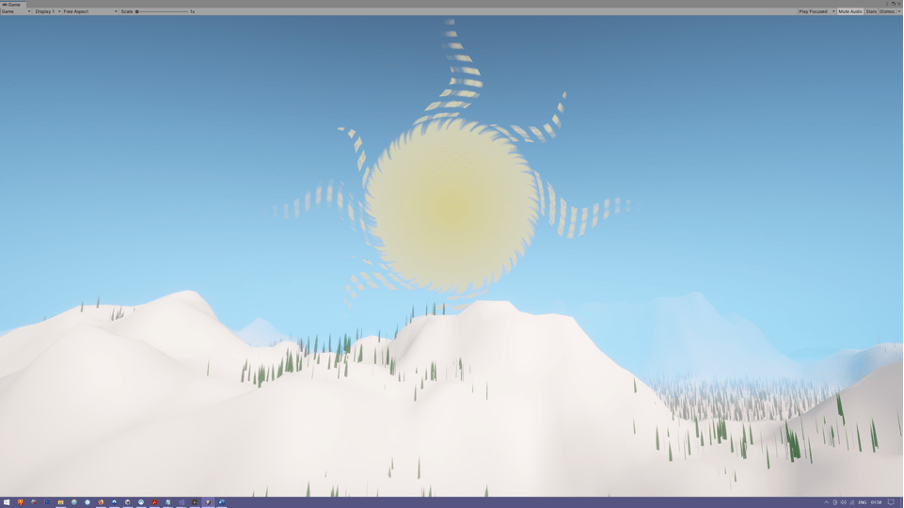

The Sun Shader

One of the four interactive elements of my landscape is the sun and “temperature”. There is an ancient symbolic trope of the sun as having a face and being depicted with wavy rays emanating from it. I wished to replicate a somewhat symbolic version of the sun with these rays, but animated, so that the rays reach out and grow.

Grass Placement Shader

The placement shader has been very difficult to implement. In summary, the goal was to create a compute shader which would take in information about the terrain shape and the current environmental parameters, as altered by the viewers, and use this to determine where on the landscape different features are located, e.g. grass, trees, houses.

The difficulty arises because the number of valid placements for a given mesh to be instanced is not static. I had first hoped to use a compute shader to generate a 2D RenderTexture and then read from it where valid placements could be made. Unity, however, cannot read pixels from a RenderTexture directly on the CPU; doing so requires transferring the data from the GPU back to the CPU, slowing the process down considerably. The result would then likely need to be passed back to the GPU as a Vector3 array in a buffer for GPU instancing, which would have slowed performance further.

Unity has a function for drawing mesh instances from data generated solely on the GPU, Graphics.DrawMeshInstancedIndirect, which takes, among other parameters, the mesh, material, bounding volume and a buffer of arguments.

This arguments buffer is an array of 5 uints which describe the mesh currently being drawn. The important elements are the first two. The first is the index count per instance, i.e. the number of indices in the mesh. The second is the instance count, i.e. the number of instances for the GPU to draw. The instance count is written into the arguments buffer using ComputeBuffer.CopyCount, which copies the counter of another buffer entirely on the GPU. Here the counter belongs to the buffer holding the positional data for the meshes, which is also passed directly to the material. For a dynamic number of meshes, the position buffer needs to be an append buffer (AppendStructuredBuffer in the shader), which is given only a maximum capacity when it is created and can hold any number of elements up to that capacity each frame.

I struggled to get the append buffer working, as I was unable to find a great deal of information on its use. When it was suggested that I try geometry shaders, I sought to approach the problem differently.
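To make the relationship between the append buffer and the arguments buffer concrete, here is a CPU-side Python sketch. It is not Unity code: `AppendBufferSim`, `place_grass` and `build_indirect_args` are hypothetical stand-ins, the height thresholds are invented for the example, and silently dropping items past capacity is an assumption of the sketch. The five-uint layout follows Unity's documentation for indirect instanced drawing: index count per instance, instance count, start index location, base vertex location, start instance location.

```python
import struct

class AppendBufferSim:
    """CPU stand-in for a GPU append buffer: fixed capacity, variable element count."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.items = []

    def reset(self) -> None:
        """Analogous to resetting the buffer's counter at the start of a frame."""
        self.items.clear()

    def append(self, item) -> None:
        # Assumption for this sketch: items beyond capacity are dropped.
        if len(self.items) < self.capacity:
            self.items.append(item)

    @property
    def count(self) -> int:
        return len(self.items)

def place_grass(heights, width, buffer, min_h=0.2, max_h=0.6):
    """Append a position for every vertex whose height allows grass (thresholds are hypothetical)."""
    buffer.reset()
    for i, h in enumerate(heights):
        if min_h <= h <= max_h:
            buffer.append((i % width, h, i // width))  # (x, height, z)

def build_indirect_args(index_count_per_instance: int, instance_count: int) -> bytes:
    """Pack the five uints: index count per instance, instance count, then three offsets left at 0."""
    return struct.pack("<5I", index_count_per_instance, instance_count, 0, 0, 0)

# Usage: the instance count comes from however many positions were appended this frame.
positions = AppendBufferSim(capacity=8)
place_grass([0.1, 0.3, 0.5, 0.9], width=2, buffer=positions)
args = build_indirect_args(index_count_per_instance=6, instance_count=positions.count)
```

On the GPU the count never has to travel back to the CPU: the copy from the append buffer's counter into the second slot of the arguments buffer happens on the device, which is the point of the indirect draw.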

Geometry shaders follow the vertex and tessellation shaders in the graphics pipeline and can be used to generate new geometry primitives on the fly. I ran into two fundamental issues. Firstly, I wished to pass in a number of different meshes created in software such as Maya, rather than writing generative scripts for each type of tree, bush and so on. When using the Unity properties Mesh.triangles and Mesh.vertices and passing these to material buffers, the order of triangle indices doesn’t seem to be consistent with the way the geometry shader draws them (see the figure below). Secondly, the geometry shader (at least in Unity) can generate geometry with a maximum vertex count of 256, which is fine for generating grass; however, I wished to expand this system to take a variety of much larger input meshes without having to write a bespoke shader for each one.

(Left) Tree redrawn with Geometry Shader, (Right) tree model downloaded

The leaf areas are ignored as they are above the 256-vertex maximum, and triangles are drawn in the wrong order, connecting different parts of the tree, with inconsistent normals creating holes.

https://www.turbosquid.com/3d-models/tree-pixel-low-poly-3d-model-1764347

Eventually, I managed to get the AppendBuffer working to place the grass, but was unable to properly implement LODs with the system, though this had a negligible effect on framerate.

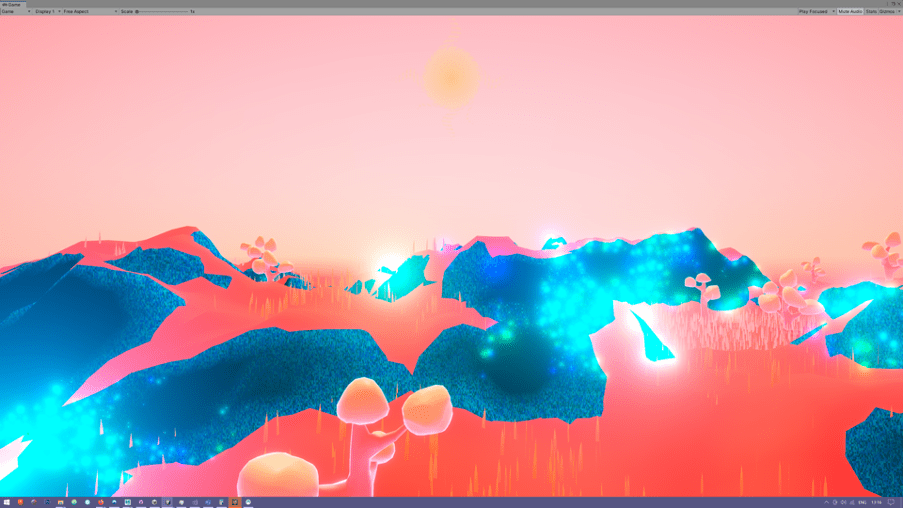

The Atmosphere Post Processor

Creating a custom post-processing script in Unity URP is a bit convoluted, requiring the creation of a custom post-processing renderer and pass to give the settings to. I followed Sebastian Lague’s tutorial on creating atmospheric scattering and adapted it to my non-spherical world.

Adding Trees

With grass placement just about working, I decided to simply use GPU instancing to place trees at random, rather than creating a buffer and using the same compute shader system to drive it. Initially I used a dummy tree from TurboSquid before replacing it with low-poly models of my own, and eventually with high-poly models from TurboSquid when I realised they would better suit the aesthetic of my textures.

Terrain Textures and Adding Decay

Interesting texture glitch when writing the atmospheric post-processing shader

I wrote a shader to give a bit of life to the terrain by using different textures under certain conditions, but initially had an error where the textures were just appearing as a solid colour. Later I discovered I hadn’t been assigning the UV texture coordinates correctly, meaning all the textures were being sampled at (0, 0).

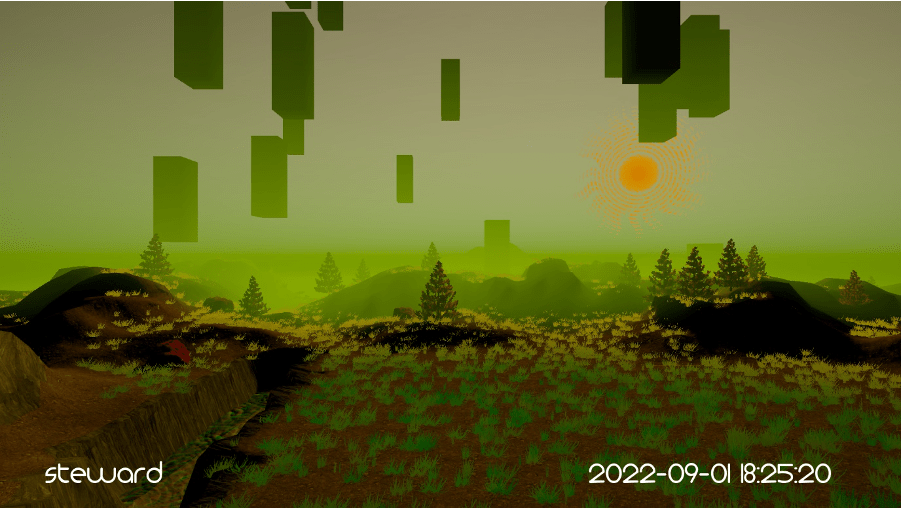

The plan for decay had been to use human settlement as an indicator. However, I thought that this would be both too difficult in the time available and too literal a reference to the climate, whereas I wanted the piece to act as a system and be about interaction with systems in general.

With that, I changed to using a black cube to represent a missing area of the world, which felt alien and digital in appearance.

Final Trees

Final Monolith Design and Timestamp

I changed the monoliths to reflect the shape of the button plinth, giving a visual link between the physical installation and the digital one.

I added a timestamp in the Python script used to print each screenshot, giving each postcard a unique identifier.
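A timestamp of this kind could be produced along the following lines. This is a minimal sketch, not the project's print script: the function names, the `postcard` prefix and both format strings are assumptions chosen for illustration.

```python
from datetime import datetime

def postcard_stamp(now=None) -> str:
    """Human-readable timestamp to print on the postcard (format is an assumption)."""
    now = now or datetime.now()
    return now.strftime("%Y-%m-%d %H:%M:%S")

def postcard_filename(now=None, prefix: str = "postcard") -> str:
    """Filename that doubles as a per-postcard identifier (naming scheme is hypothetical)."""
    now = now or datetime.now()
    return f"{prefix}_{now.strftime('%Y%m%d_%H%M%S')}.png"
```

A timestamp with one-second resolution identifies each postcard only as long as two are never printed within the same second, which seems a safe assumption for a physical printer.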

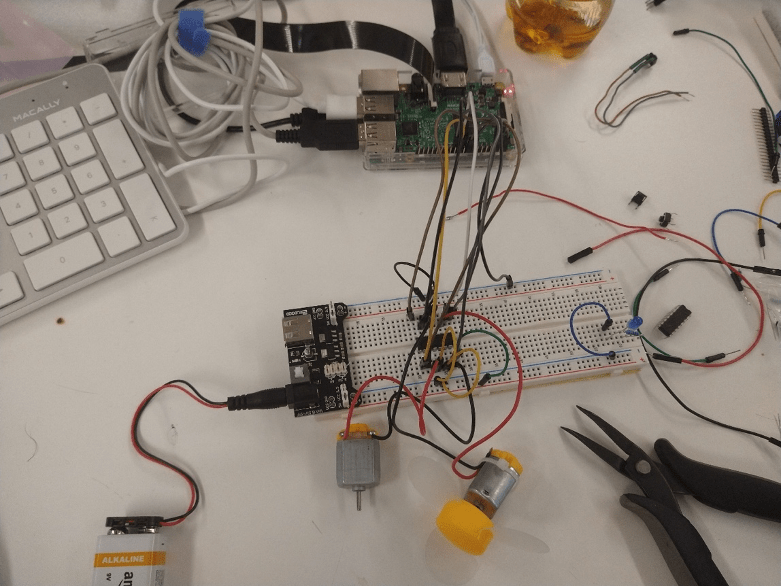

Physical Installation

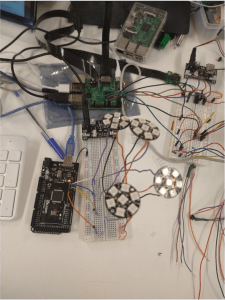

The physical installation was to consist of a plinth with four buttons, corresponding to the four interactive parts of the Unity scene. The plinth would take in a postcard, scan it for the QR code and then allow the user to push a button and change what was on screen.

The first part was to create the system to take the postcards from the user, which involved a DC motor. The small motors that come in the Arduino kit proved to have too little torque, so I used a 12V DC motor and 3D printed a mangle, i.e. two counter-rotating cylinders that pull the postcard away from the user, building the sacrifice into the interaction.

The four symbols for my buttons.
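The interaction described here can be read as a small two-state machine: the buttons do nothing until a postcard has been scanned, and one press consumes that scan. The sketch below is only one way of modelling it in Python; the class, state and method names are hypothetical, and no real scanner or GPIO code is involved.

```python
from enum import Enum, auto

class State(Enum):
    IDLE = auto()    # waiting for a postcard, buttons dark
    ARMED = auto()   # postcard accepted, buttons lit

class Plinth:
    """Hypothetical model of the plinth logic: scan first, then one button press."""

    def __init__(self, button_count: int = 4):
        self.button_count = button_count
        self.state = State.IDLE
        self.changes = []  # indices of the world aspects changed so far

    def on_postcard_scanned(self, qr_payload: str) -> bool:
        """Arm the buttons when a non-empty QR payload is read while idle."""
        if self.state is State.IDLE and qr_payload:
            self.state = State.ARMED
            return True
        return False

    def on_button_pressed(self, index: int) -> bool:
        """Apply one change per scanned postcard, then return to idle."""
        if self.state is not State.ARMED or not 0 <= index < self.button_count:
            return False
        self.changes.append(index)
        self.state = State.IDLE
        return True
```

Modelling it this way makes the cost explicit: each change to the world requires exactly one postcard to be given up.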

The buttons were 3D printed in black with a translucent “natural” PLA to allow light to shine through and illuminate the symbols. A cross underneath enables these larger movable sections to press the standard small push buttons, and the whole system stands on springs to give resistance and pushback.

The illumination is handled by Adafruit NeoPixel Jewels, circular arrays of 7 NeoPixels that can be individually addressed, allowing me to assign different colours to different parts of the buttons, reflecting the colours in the symbols.

Once glued together, the buttons were sanded down and given a matt acrylic varnish.

Reflection and Future Development

This is by far the largest project I’ve ever undertaken and whilst I think I managed my time well with regards to completing the project, I think it could have been helped with a more formalised organisational system to give a better work/life balance.

Having never used shaders or compute shaders before, I found it an incredibly valuable and challenging experience and have learnt an incredible amount, especially regarding when it isn’t worth using them. Many of the graphics-based issues I faced might have been alleviated if I’d used the Unity Shader Graph from the beginning, producing equal or better results, but I enjoyed the process of learning how shaders work.

Using compute shaders for the grass placement was also probably a bit overkill for what I was trying to do; however, again, this is a position that can only be reached in hindsight, now that I have a better understanding of the processes involved.

On the physical side of the installation, I ran out of time to make the project totally robust and neat, but was able to address many of the concerns regarding the intuitiveness of its interaction and received many comments about the attractiveness of the buttons I was able to make, which I had known from the start would be a focal point of the project as the primary method of interaction.

Working with the Raspberry Pi’s GPIO outputs was another notch I had wanted to add to my belt, so being able to do the majority of the physical computing on the Pi was very helpful as a learning experience.

For future development I am going to rework the insides of the button plinth, making it more solid and robust, rather than held together with tape and cardboard as it was for the exhibition. I will also address the couple of times people were able to shove cards above the rollers, causing the system to jam.

As the piece develops over a period of time, it is important for me to consider how the story is presented to the audience during the course of an exhibition. I displayed the postcards fed in on the opening night on the wall for this, but this led to people taking those postcards and feeding them into the system, which I hadn’t wanted and which caused issues due to the sticky tape.

For the simulated world itself, there is much realism that could be added. Introducing hydraulic erosion and perhaps a non-moving but more slowly developing landscape are directions the work could take, as are trees, shrubs and grass growing in place, dying and falling over, and a more realistic water simulation. I think it would be interesting to develop these in a way that evokes rather than suggests climate simulation, keeping the piece about a system and not a chauvinistic attempt at “simulating” the natural world.

References

Acerola. 2021. How Do Games Render So Much Grass?. Video. https://www.youtube.com/watch?v=Y0Ko0kvwfgA.

AI and Games. 2022. How Townscaper Works: A Story Four Games In The Making | AI And Games. Video. https://www.youtube.com/watch?v=_1fvJ5sHh6A.

Ameye, Alexander. 2021. “📝 Custom Render Passes In Unity”. Blog. Alexanderameye.Github.Io. https://alexanderameye.github.io/notes/scriptable-render-passes/.

Boeing, Adrian. 2011. “Twist Effect In WebGL”. Blog. Adrian Boeing: Blog. http://adrianboeing.blogspot.com/2011/01/twist-effect-in-webgl.html.

Brain, Tega. 2018. “The Environment Is Not A System”. A Peer-Reviewed Journal About 7 (1): 152-165. doi:10.7146/aprja.v7i1.116062.

Eglash, Ron. 2007. “The Fractals At The Heart Of African Designs”. Presentation, TED, 2007.

Game Dev Guide. 2020. Getting Started With Compute Shaders In Unity. Video. https://www.youtube.com/watch?v=BrZ4pWwkpto.

Golden, Tim. 2007. “Tim Golden’s Python Stuff: Print”. Timgolden.Me.Uk. http://timgolden.me.uk/python/win32_how_do_i/print.html#shellexecute.

Guerin, Eric. 2022. “Github – Eric-Guerin/Gradient-Terrains: Code Source Of The Paper “Gradient Terrain Authoring” – EG 2022”. Github. https://github.com/eric-guerin/gradient-terrains.

Gumin, Maxim. 2022. “Github – Mxgmn/Wavefunctioncollapse: Bitmap & Tilemap Generation From A Single Example With The Help Of Ideas From Quantum Mechanics”. Github. https://github.com/mxgmn/WaveFunctionCollapse.

Helland, Tanner. 2022. “How To Convert Temperature (K) To RGB: Algorithm And Sample Code”. Tannerhelland.Com. https://tannerhelland.com/2012/09/18/convert-temperature-rgb-algorithm-code.html.

Jukes, Matt. 2021. Feelscape. Digital. London: https://feelscape.art/.

Kisielewicz, Kornel, and Andreas Brinck. 2012. “How To Determine If A Point Is In A 2D Triangle?”. Stack Overflow. https://stackoverflow.com/questions/2049582/how-to-determine-if-a-point-is-in-a-2d-triangle.

Lague, Sebastian. 2020. Coding Adventure: Atmosphere. Video. https://www.youtube.com/watch?v=DxfEbulyFcY.

Lague, Sebastian. 2022. “Github – Seblague/Hydraulic-Erosion”. Github. https://github.com/SebLague/Hydraulic-Erosion.

Millar, Chance. 2022. “Unity-Built-In-Shaders/Skybox-Procedural.Shader At Master · Twotailsgames/Unity-Built-In-Shaders”. Github. https://github.com/TwoTailsGames/Unity-Built-in-Shaders/blob/master/DefaultResourcesExtra/Skybox-Procedural.shader.

Ned Makes Games. 2020. Grass Fields In Unity URP! Generate Blades With Compute Shaders! ✔️ 2020.3 | Game Dev Tutorial. Video. https://www.youtube.com/watch?v=DeATXF4Szqo&t=1178s.

Ned Makes Games. 2020. Intro To Compute Shaders In Unity URP! Replace Geometry Shaders ✔️ 2020.3 | Game Dev Tutorial. Video. https://www.youtube.com/watch?v=EB5HiqDl7VE.

Ned Makes Games. 2021. Blade Grass! Generate And Bake A Field Mesh Using A Compute Shader ✔️ 2020.3 | Game Dev Tutorial. Video. https://www.youtube.com/watch?v=6SFTcDNqwaA.

Ned Makes Games. 2021. Generate A Mesh Asset Using Compute Shaders In The Unity Editor! ✔️ 2020.3 | Game Dev Tutorial. Video. https://www.youtube.com/watch?v=AiWCPiGr10o.

Paul, Abhishek. 2022. “Nature Assets Pack 1 3D”. Turbosquid. https://www.turbosquid.com/3d-models/nature-assets-pack-1-3d-1843708.

python, How, Nadav Kiani, Dorijan Cirkveni, and Billy Bonaros. 2022. “How To Check For New Files In A Folder In Python”. Stack Overflow. https://stackoverflow.com/questions/57840072/how-to-check-for-new-files-in-a-folder-in-python.

Stan, Roy. 2019. “Grass Shader”. Blog. Roystan.Net. https://roystan.net/articles/grass-shader.html.

Weber, Matthias. 2015. “Bryce 3D (An Epitaph)”. Blog. The Inner Frame. https://theinnerframe.org/tag/eric-wenger/.

“3D Xfrog Plants Honey Locust – Gledista Triacanthos”. 2022. Turbosquid. https://www.turbosquid.com/3d-models/3d-xfrogplants-honey-locust-gledista-triacanthos-1734005.

“Coconut Palm 3D Model”. 2022. Turbosquid. https://www.turbosquid.com/3d-models/coconut-palm-3d-model-1754588.

“Compute Shaders”. 2020. Blog. Catlike Coding. https://catlikecoding.com/unity/tutorials/basics/compute-shaders/.

“Computeshaders With Multiple Kernels”. 2014. Forum.Unity.Com. https://forum.unity.com/threads/computeshaders-with-multiple-kernels.222760/.

“Custom Post Processing In Unity URP”. 2022. Blog. Febucci.Com. https://www.febucci.com/2022/05/custom-post-processing-in-urp/.

“Github – Adafruit/Adafruit_VCNL4010: Arduino Library For VCNL4010 Sensors”. 2021. Github. https://github.com/adafruit/Adafruit_VCNL4010.

“Graphics.Drawprocedural”. 2020. Blog. Ronja’s Tutorials. https://www.ronja-tutorials.com/post/051-draw-procedural/.

“How To Sample A Texture In Vertex Shader”. 2018. Forum.Unity.Com. https://forum.unity.com/threads/how-to-sample-a-texture-in-vertex-shader.513816/.

“Intro To Shaders”. 2021. Blog. Cyanilux. https://www.cyanilux.com/tutorials/intro-to-shaders/.

“Looking Through Water”. 2018. Blog. Catlike Coding. https://catlikecoding.com/unity/tutorials/flow/looking-through-water/.

“Pine Tree Model”. 2020. Turbosquid. https://www.turbosquid.com/3d-models/pine-tree-model-1508559.

“Shadow Attenuation Issue On URP Spot Light In Custom Lighting.”. 2020. Forum.Unity.Com. https://forum.unity.com/threads/shadow-attenuation-issue-on-urp-spot-light-in-custom-lighting.928908/.

“Texture Distortion”. 2018. Blog. Catlike Coding. https://catlikecoding.com/unity/tutorials/flow/texture-distortion/.

“Textures For 3D, Graphic Design And Photoshop!”. 2022. Textures.Com. https://www.textures.com/download/3DScans0133/132389.

“Textures For 3D, Graphic Design And Photoshop!”. 2022. Textures.Com. https://www.textures.com/download/3DScans0869/141752.

“Textures For 3D, Graphic Design And Photoshop!”. 2022. Textures.Com. https://www.textures.com/download/PBR0830/139432.

“Tree Pixel Low Poly 3D Model”. 2022. Turbosquid. https://www.turbosquid.com/3d-models/tree-pixel-low-poly-3d-model-1764347.

“Treepack With 3 Trees 3D Model”. 2022. Turbosquid. https://www.turbosquid.com/3d-models/3-trees-3d-model-1538515.

“Using Rwtexture2d<Float4> In Vertex Fragment Shaders”. 2022. Forum.Unity.Com. https://forum.unity.com/threads/using-rwtexture2d-float4-in-vertex-fragment-shaders.531872/.

“Waves”. 2018. Blog. Catlike Coding. https://catlikecoding.com/unity/tutorials/flow/waves/.

“Writing Shader Code In Universal RP (V2)”. 2021. Blog. Cyanilux. https://www.cyanilux.com/tutorials/urp-shader-code/.

“(Shader Library) Swirl Post Processing Filter In GLSL”. 2022. Blog. Geeks3d. https://www.geeks3d.com/20110428/shader-library-swirl-post-processing-filter-in-glsl/.
