Classic pitch: The world is dying, let's spread the word of how amazing nature is to raise awareness and save it.

New twist: You never get to see the natural world.

The future: Nature will forever be a fiction, and now it will be mediated to you through AI.

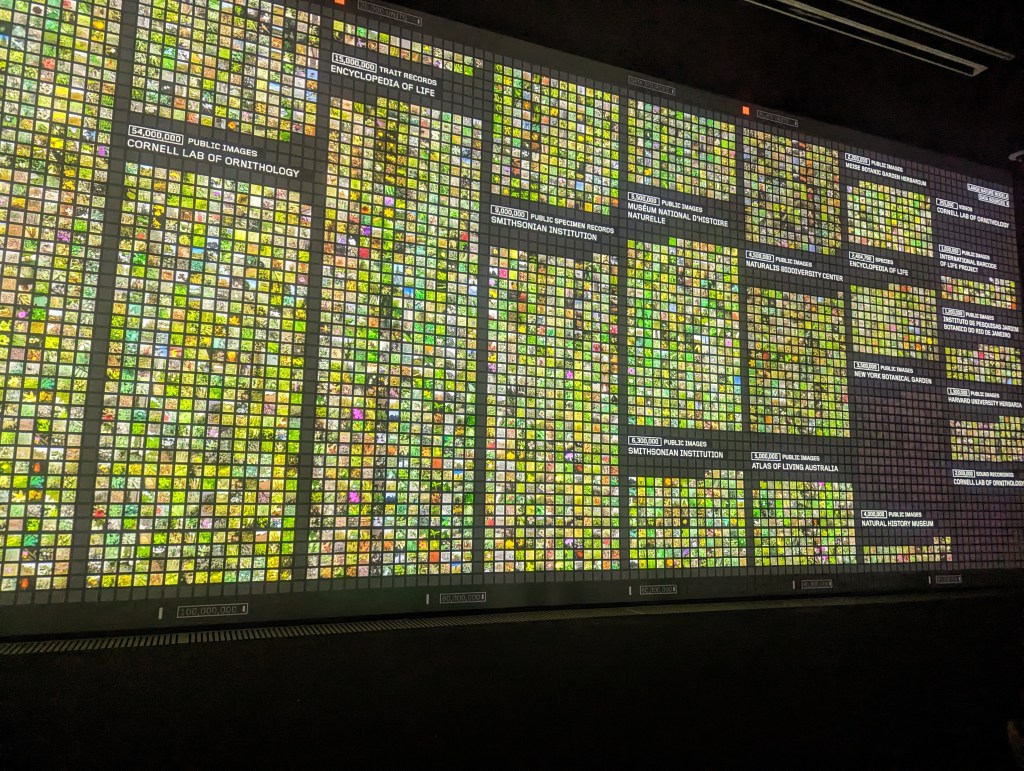

Refik Anadol Studio has created one of the most fantastic artworks of the century. But it was not on display at the Serpentine. If you add up the number of images from the various “openly accessible” sources mentioned in the show “Refik Anadol: Echoes of the Earth: Living Archive”, they total over 240 million. If Refik Anadol Studio has been able to collate this many images and use them to train a “Large Natural Model”, they have performed an incredible piece of documentary and archival work. If it were open to the public, made accessible, and free, it would be an immense gift to humankind. There are many barriers to something like this being made freely available, of course: archives aren’t cheap to source or maintain. Nonetheless, the collation of something such as this is astonishing.

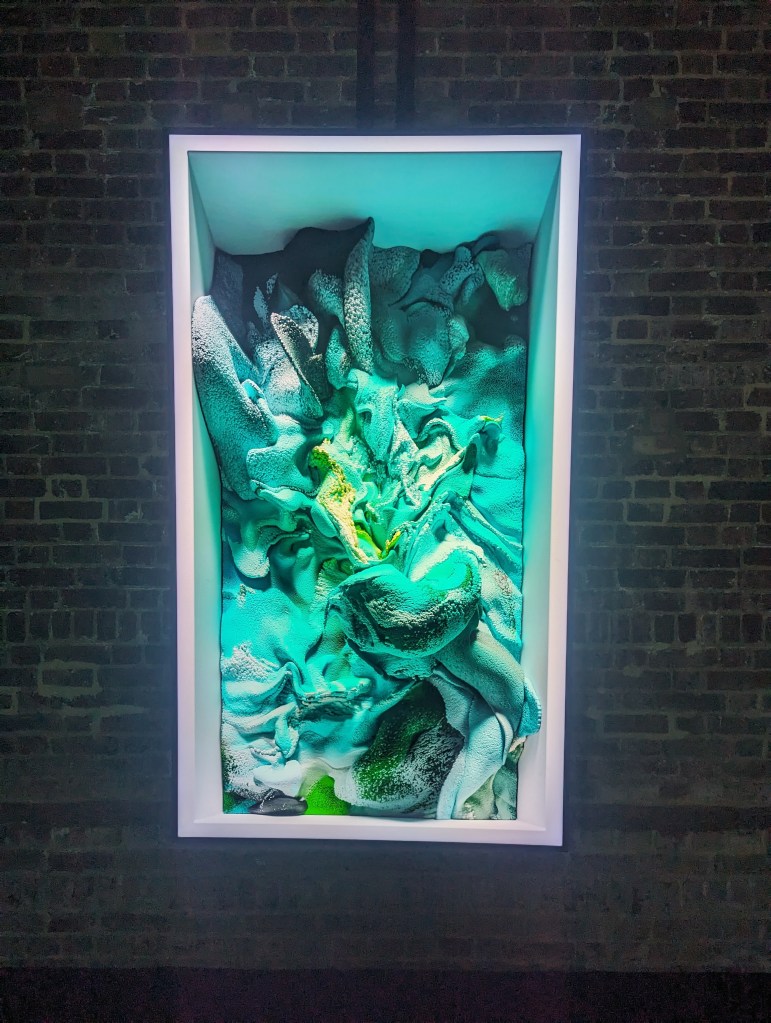

Echoes of the Earth: Living Archive, showing at Serpentine Gallery North, is precisely not that: a living archive. Wrapping around the outer wall are projections looping through Anadol’s trademark flowy balls, AI-generated terrain as if seen from above, balls again, AI-generated rainforests, more balls, AI-generated corals, balls once more, AI-generated birds, and then, after some balls, a visualisation of the vector space of the dataset. Or part of the dataset. There were some AI flowers in there too. Nowhere here is there a living archive. A living archive is one in flux, accessible, readable, made alive and available through use, through being an archive, not merely a storage dump for information. If this archive is not accessible, it is not alive.

What an AI model trained on an incredible archive of data is, I can’t quite say exactly. Emily Bender called Large Language Models “Stochastic Parrots”, and used in the way of this show, the Large Natural Model is just that. (Especially fitting given the subject matter of rainforests). But contrary to the metaphor we are directed to run with, this model is certainly not alive, and the products it creates are certainly not “nature”.

AI-synthesised images of the “natural” world are a double-layered fiction. The first layer comes from the dataset: the fiction in images. No image is the truth, all are editorialised; how a shot is composed, additional lighting, the cropping of images, staging, time of day, type of lens, type of sensor, post processing, the list goes on. Even the accidental photo you took of your startled face in the reflection of the window as you dropped your phone when a pigeon flew past is a composite of these editorial decisions, despite you not intending to make them. An image carries a situated perspective. For those in Refik Anadol’s dataset, these will be the perspectives of specimens on a neutral (colour-wise, this would carry the signifiers of a scientific archival environment) background with a colour and scale chart, or photographs and lidar scans from and of particular places in rainforests, near or away from paths, in areas with or without light, taken from the head height of a 6-foot-tall PhD student with the highest quality lens available, or from the head height of an armadillo by a trap-camera as said armadillo waddles by on the trail of some food. All of these are worthy images that would say much about the world around us, and give us clues and understanding of its wonders, but they are none of them “truth”. Images hide within them the story of their creation, and (when digitised) are no more than numbers in an array, from which we make inferences about the world. We remember our experience and learn from images, but this occurs within our heads and is not present in the image itself. In short, the image, as a sensory loop, is a fiction.

The second layer of fictioning is within the AI model and its syntheses. You do not need me to tell you that giant mushrooms 30ft tall with glowing gills like lampposts do not exist. But, aside from low quality concept art, perhaps it does need exploring why an AI image of an African Swallow is not an image of an African Swallow. It may be illustrative, but there is no root truth to this illustration; it will forever be derived from a space built of all its input images together. An illustrator would be making choices to include or omit details of the bird, and capture or perhaps fabricate distinctive features of the individual, but would do so as choices. The root of the ick of AI images is their presentation as heralding some truth. The seamless transition between them as they morph is just entertainment; it is a complete look-alike, constantly showing something ab-natural. The petals of the flowers continuously cease to make sense. To a flower, this isn’t simple decoration, it is structure, it is advertisement, it is sexual in both the sensual and reproductive sense. There is structure and purpose to a flower folding, the culmination of billions of successful evolutionary accidents, and it does a disservice to the natural world to reduce this to an essential “floweriness” that takes only the abstract perspectival visual of folded coloured velvet sheets by means of raw computational averageness of images of flowers.

My question at the heart of this exhibition is why is the AI being used to synthesise anything at all? Corals are cool, show me some whacky ass anemone, hit me with a cordyceps and then a reishi and then an oyster. The diversity of nature is expansive beyond human comprehension, and it is a wonder to itself. To have a clutch of images such as Refik Anadol Studio has managed to amass to train this model is all the wonder of nature at the activation of a database. There are probably 25-ish screens in the show, and 240-250ish million images in the collection. Each screen showing 1 image every 0.1s (the blink of an eye) would take about 289 days, by my estimate, to get through them all. That’s a lot of data, completely unmanageable, incomprehensible by any human. What sort of entity could make inferences connecting various bits of data within an archive so large? I know! An AI!
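The estimate above can be checked with a few lines of Python. This is only a rough sketch: the 0.1s per image and the 25-ish screens are the loose figures from the paragraph above, while the function name and the idea of splitting the collection evenly across screens are assumptions made purely for illustration.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def days_to_show(images: int, seconds_per_image: float = 0.1, screens: int = 1) -> float:
    """Days needed to show every image once, non-stop.

    Assumes (hypothetically) that the collection is split evenly across
    `screens`, each showing one image every `seconds_per_image` seconds.
    """
    total_seconds = images * seconds_per_image / screens
    return total_seconds / SECONDS_PER_DAY


# Low and high ends of the "240-250ish million" guess.
for images in (240_000_000, 250_000_000):
    one_screen = days_to_show(images)
    all_screens = days_to_show(images, screens=25)
    print(f"{images:,} images: {one_screen:.0f} days on one screen, "
          f"{all_screens:.1f} days across 25 screens")
```

At the 250 million end, a single screen takes roughly 289 days of uninterrupted viewing, which is where the figure above comes from. Even if all 25 screens shared the load, it would still be more than eleven days without blinking.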

To me, it seems ludicrous to have taken this data and then obfuscate it behind a wall of generated imagery and call it a living archive of nature. I read a tweet recently that I’ll paraphrase here: AI taking over isn’t a bad thing, it’s just about direction: I want AI to take over doing my laundry and washing the dishes while I focus on art and writing, I don’t want AI to take over art and writing so I can do my laundry and wash the dishes. We don’t need AI lenses to view the world for us and vomit out an idealised average of it. We need to be aware of the world as it is, in its breadth and enormity and its fragility. How glorious would Refik Anadol Studio’s model be if it showed us connections between different images, showing the similar patterns that appear throughout nature? Or built chains of images that show us through an ecology via trophic levels, or the interconnectedness of different beings that exist in different niches in a geographical space? I think about Google’s bird sounds experiment, which compares thousands of birds by the visualisation of their calls. This is a tool of realism, and education, using machine learning to provide a map through recorded attributable data, explorable in a way that is fun and immediate. The natural world is a fantastic painter and sculptor, and creates dramas and compositions that match anything we can dream up. And when we do dream up fantasies, we can view that as an expression of an individual, a distillation of culture and nature through the hand of an artist, a person, an empathetic node we might seek to inhabit. These visions are situated, with the bias and flaws associated, because they are something of the human, allowing us to investigate a fellow human, develop our empathy toward their position, and grow ourselves through this shared moment.

I am interested in what the machine dreams, but these aren’t electric sheep. To a computer, an image is that series of numbers. Any series will do, white noise or Velazquez, they are of equal hierarchy. To us, we have a hierarchy of recognition, the things that inhabit our world, or patterns that excite us and allow our mind to play on their surface. With a diffusion model, an embedding, an AI is presented with hierarchies of connection between those random numbers and what an output “vibes” like. It is not dreaming, it is mathematising. The instructions might be bad, the dataset might be holey, or it might be asked to play looser not to overfit, but I wouldn’t consider this a dream.

What delights me about the prospect of AI is how it can make connections that I couldn’t. If we were scrolling through a stream of mushrooms and then suddenly a duck inexplicably popped up, this would be a dream, a hallucination, a connection that is purely mathematical. The dream is the unintended connection, but it isn’t enough on its own.

We are likely all familiar with experiences taking over the art-space, installations, immersion becoming the growth medium of sculpture. Images, objects are cheap. I think this in some part has to do with the growth of artists like Olafur Eliasson and Mike Nelson. Big screens fill repurposed garages in central London. There is an intangible we want to reach more accessibly than through a painting because the flat, unmoving canvas presents a challenge unique to modernity, the oversaturation of images, the overstimulation of our eyes. None of this is to say painting is losing its draw, but I often find painting shows to be boring, drab, self-absorbed affairs. I love paintings but often they all look the same, crafted to be repackaged on Instagram, flat and lifeless or thick … and lifeless, hyperreal or semi-real or painted by robot or assistants trying to be robots. When we stand in an installation it is a vibe, something to feel, to be captured by or unnerved (Sontag would be proud). The wonder of the real that is more open to touch, a wonderland to explore, the realisation of virtual reality but not mediated through a screen.

And then we return to installations in garages in central London, made of big screens that follow the viewer, or play an animation on loop, or continually generate evolving outcomes. Impressive cus big. Impressive cus dark space. Impressive cus not white cube. Impressive cus rave-lite. Colourful. And Refik Anadol fits right in, so perfectly into the “what images can go on screens and be big in a place?”, place. There is for sure technical mastery at play, this isn’t up for question, but it doesn’t do anything past the first time you saw it on Instagram or in a basement. Each is the last, more so than a painter locked into a style, because you know these balls are a parameter apart, a few clicks and a render, press play on the projector. The wonder and novelty is a major draw, these are highly stimulating artworks, and the rhythmic flowing screensavers really keep you guessing what’s going to come next.

But with this show we were treated to this screensaver interwoven with AI landscapes and generated blandness presented as itself on its face with no further substance than “Look, it’s like that planet you live on”. Where’s the doubt? Where is the crippling fear? I bet there are many species within the dataset collected that have subsequently gone extinct. Not to even mention the ecological effect of the compute required to train and create such a model. Let’s think about this for a moment. Here we have an artwork, content to regurgitate crossfixations made by mathematical proxy, with no human direction, that has at its core documentation of the world being lost, and it doesn’t care to bring that to light, but rather fantasises idly upon it. There are no fantastic beasts or strange chimeras, there’s no speculative biology, no potentials on display. There is no future here. Only the captured present and lost recent past. No potential. No future for you.
