What is life? Baby don’t hurt me, don’t hurt me, no more. This is what I imagine a comedically inspired machine intelligence would say when attempting to assert its consciousness to a sceptical human observer. It’s an ethical question that hints at the current topic of discussion. To show intelligence, a machine would have to imitate life in some manner, taking in stimuli and outputting something. But it wouldn’t survive an assault by MRS GREN (the school mnemonic for the seven characteristics of living things). What is uncomfortable about the idea of machine consciousness is that we might be unable to deny life to something so alien. It wouldn’t be made like us, would therefore perhaps be unlikely ever to think like us, and in all likelihood would consider the “it” of itself to be something fundamentally different to the “it” we perceive it as.

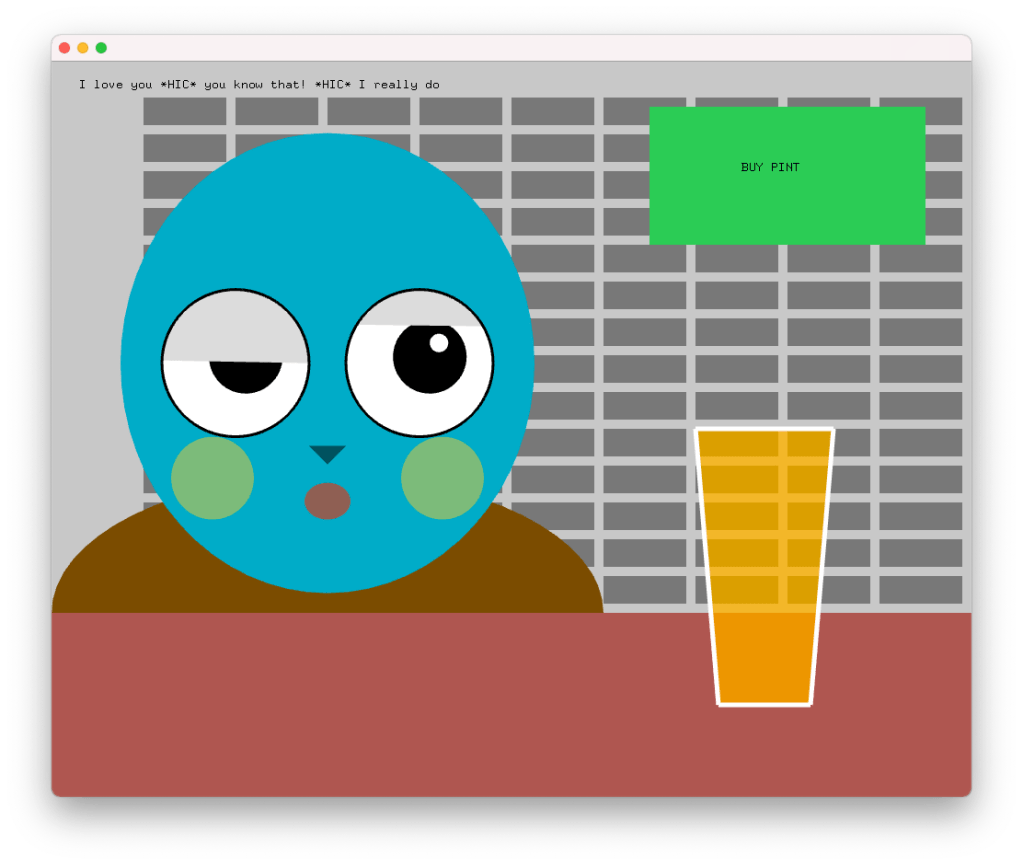

But for Turing, it would pass for intelligent. Turing used the notion of passing in this context to describe the Imitation Game, in which an interviewer, unable to see the interviewees, is asked to tell from textual interactions alone the gender of the person they are talking to. The term has an older use in queer communities, wherein to pass is to adequately assume (to an observer) the characteristics of the identity one wishes to be perceived as. Brought into the computational realm, this was the foundation of the famous Turing test, in which one of the interviewees is replaced by an AI and the interviewer is tasked with determining which of them is in fact the robot.

Turing argued that this is all one should need to determine the validity of a claim of intelligence or personhood or any other identity. The empiricist perspective takes the whole fabric of identity as a performance, a performative matter, the degree of its success lying only in the complexity of the role and the capability of the player. In this sense, any sufficiently advanced intelligence may only be feeding back a series of subroutines that would give a deterministic answer to any given problem; however, the ability of its answers to fool us is all that matters.

We can’t look under our own bonnets and see the consciousness floating around our brains, so why assume we shall be able to do so within a computer? A consciousness could perhaps be like the algorithms described last week: the thing and the whole of the thing, an identity with no centre.

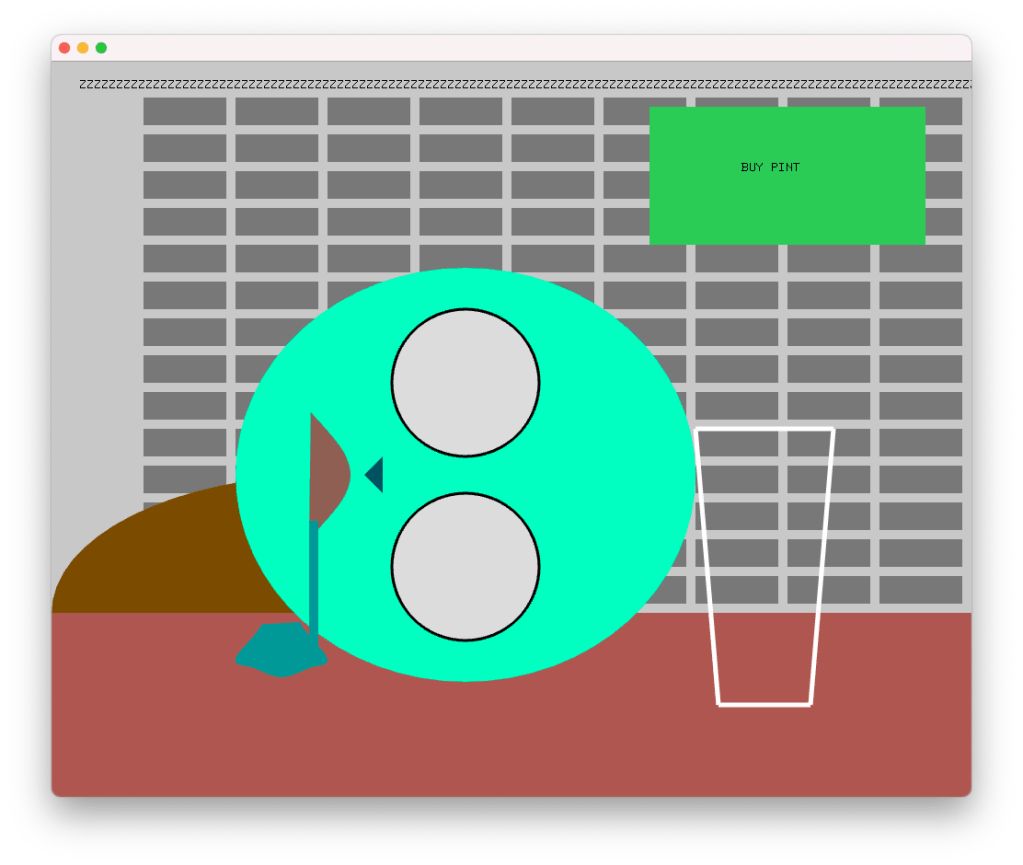

In this way we get back to material specificity, and a further question: should we be looking to create an AI in our own image, or will intelligence only be manufactured when we engage in the mode of thinking at which computers are most adept? (Now, I just want to preface this by saying I have no idea what current research into AI really looks like or how it relates to human modes of thinking, other than something something neural network.) But there was a cool line mentioned in class: “machines learned to fly when we stopped imitating birds” (the Hegelian adequacy from last week comes back to bite us with a wink and a nod, almost as if this were a planned series of lectures, with information drip-fed to build connections throughout the course; who’d’a thunk it?).

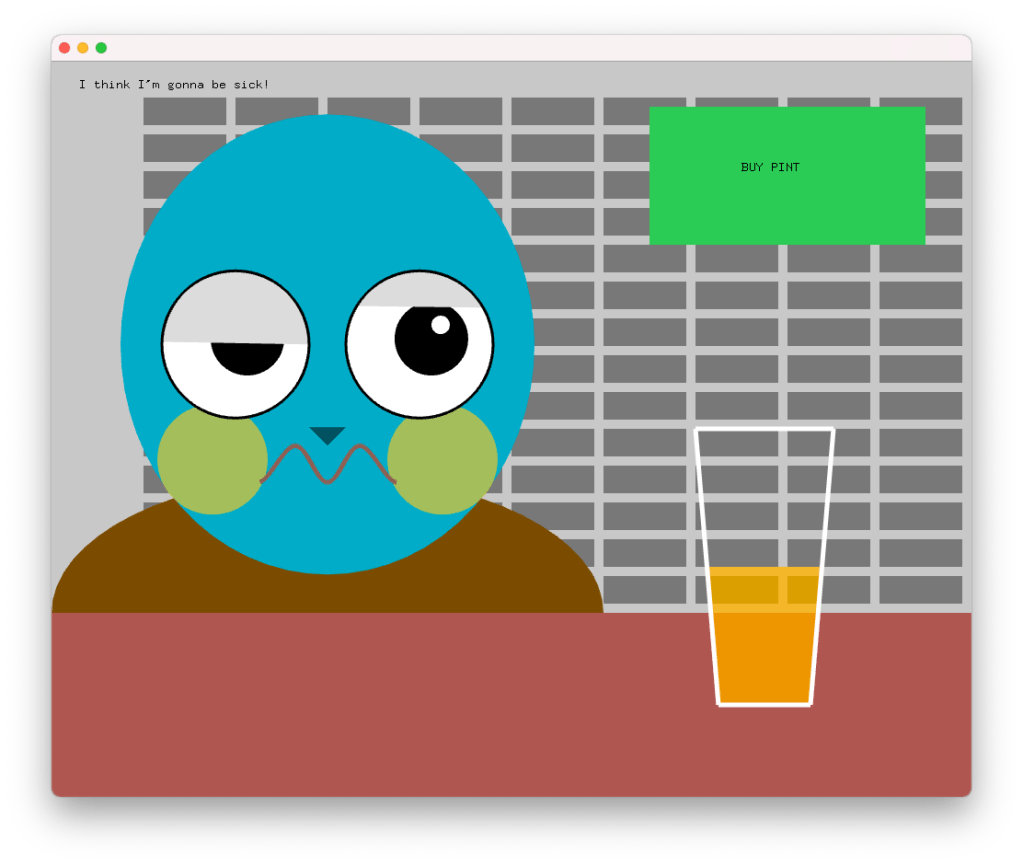

Perhaps our issue is getting machines to talk and act like us before they can talk and act adequately like themselves. These are beasts of steel and silicon, after all, not carbon and water such as we.

The embodiment of intelligence is very eloquently set out in the Descartes text we were given before class, before he so injuriously adds a caveat at the end to separate us noble men from the land of animalia. He describes how an automaton of a dog could be made so indistinguishable from a dog as to be one and the same, and argues that, as animals have an intelligence rooted within the organs of their bodies, they are limited in their capacity to talk to us. But we, being able to talk to each other, would be able to see through a perfect human automaton, as it would not be able to arrange words in new orders to correspond to the meaning of something in its presence.

I believe that this is a dishonest move, as he has argued the position of all the animals and kept one apart, connecting it to God as a special version of all His creatures. Had he been writing after Darwin, perhaps he’d have come to a different conclusion, but I suspect the conclusion was written before the argument. I agree with the earlier assertions in the text: we would be unable to distinguish a dog automaton from a dog, as we have no experience of being a dog. But equally, I have no experience of being someone else, so a sufficiently advanced human automaton would probably do the same trick. Beyond this, there is the notion Descartes raises of the bodily development of intelligence, a position I have a great deal of time for (as you can see from my references back to adequacy). We bring the experience of the world to our processing brains, and through our bodies we enact their proclamations and then review the results. We are creatures that think through our bodies and through our ability to manipulate objects and tools. I find it impossible to imagine that an entity that evolved with a different way of experiencing the world (e.g., with different eyes or ears or noses, as, say, a dog has) would have a way of thinking similar to our own. And I believe this is something the field of linguistics explores: how different languages alter the way in which people approach and perceive the world.

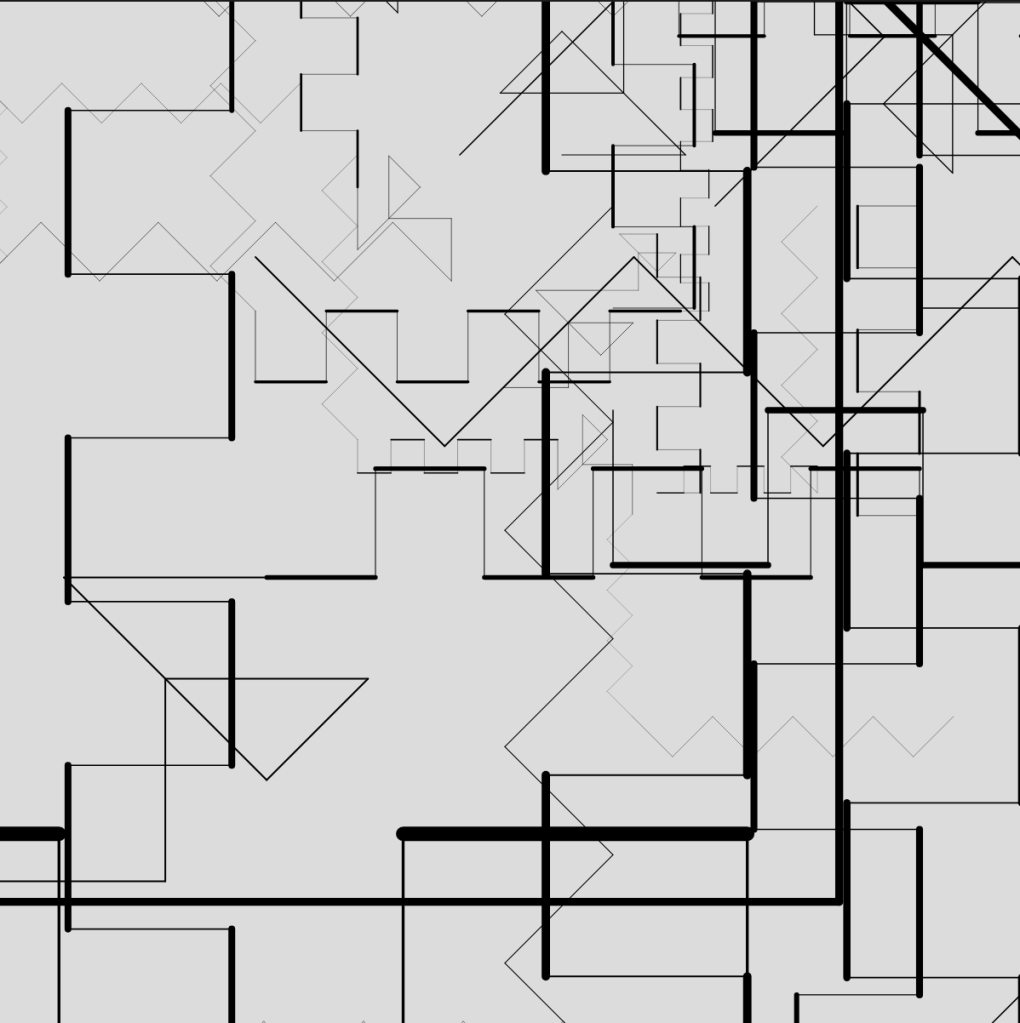

This weight of bodily experience is reflected in the world we have created for ourselves. The inferences we draw from novel objects, which we call “intuition”, are the given experience of a body language that has developed and been written since the first tool was crafted. Indeed it began before then, but the development of our artificial resources for storing information and energy begins with the first tools. The grand scale of that is reflected in our colonisation of an entire planet. As individuals we do not learn to think in isolation, but through collaboration with our peers and our ancestors. And it is here that I think we find a sticking point in the development of computational intelligence. The human species had to process a phenomenal amount of data to get to the point of writing, which is to say the point of fundamentally representing the world in a way that is completely abstracted from human experience. We are feeding crazy amounts of data to machines, and at an incredible rate, but interacting with a human, on human terms, is, for a machine, an abstract task.

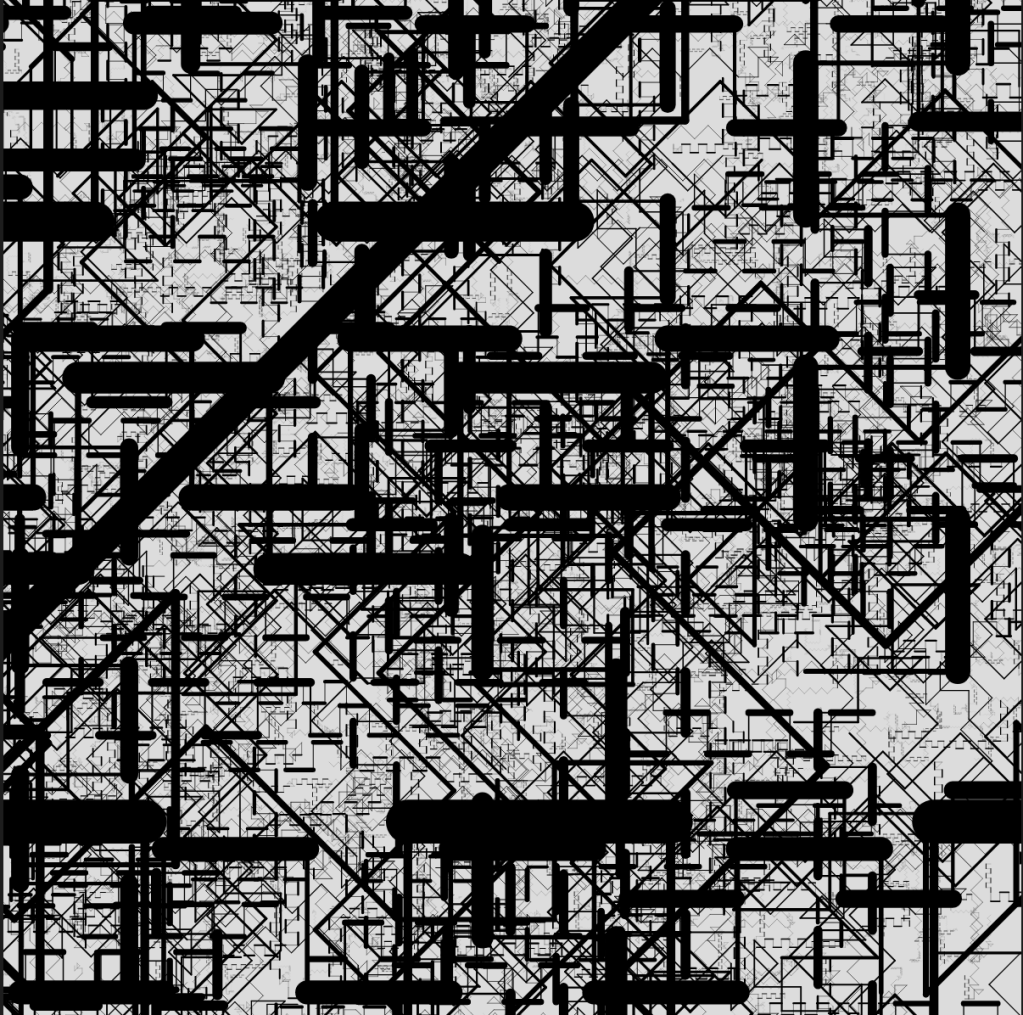

This data or information or energy that I refer to as being stored in or shared by human history and objects isn’t simply the mathematical forms that represent knowledge or understanding. In the case of the computer, it is mapped in Anatomy of an AI System (https://anatomyof.ai/img/ai-anatomy-map.pdf) as the wealth of labour, energy and resources given to building the computers, creating the data, generating power to run the machines, and running the distribution platforms: the infrastructure that allows all this to happen. The entire global capitalist system is called upon to create and run these tools. When the energy this structure puts into AI development matches that of the tens of thousands of generations it took humans to attain a level of consciousness, then I believe we shall see something akin to a conscious mind.
